Are language models in hell?

I want to share a little provocation about AI language models. You can skip ahead to that, if you like. Before I get to it myself, I’m going to review several items that might be interesting to those of you subscribed to this occasional lab newsletter.

Here we go:

I whipped up a little mini-site for my new novel, coming in June 2024. What’s the point of writing a novel, if not for the excuse to make a mini-site?? I’m very proud of the theme transition effect —

Matt Webb’s recent post about how it feels to write programs in different languages is wonderful. Code synesthesia! If you are someone interested in the bleeding, buzzing edge of “what computers might do, and how”, you really need to be reading Matt every week. You can receive new posts via email —

I continue to believe that Val Town is up to something really clever and appealing —

Google Domains is shutting down, the latest in a long procession of profitable Google products with millions of users that, because I just wrote “millions” instead of “billions”, are judged extraneous.

It’s a bummer, because Google Domains was, and is, a truly beautiful, functional product; the people who made it should feel very proud.

(A cross-post from a recent general newsletter:)

You probably “know” that car manufacturing is an amazing, high-tech process, but when’s the last time you actually saw a car factory?

This short promotional video from Toyota is bland and dorky, but no amount of dorkiness can dilute the fabulous engineering on display here.

It’s healthy, I think, to behold real INDUSTRY. In a world of so many ghostly promises, so many vague disappointments, this kind of work still inspires awe.

Here are a few book recs from the lab!

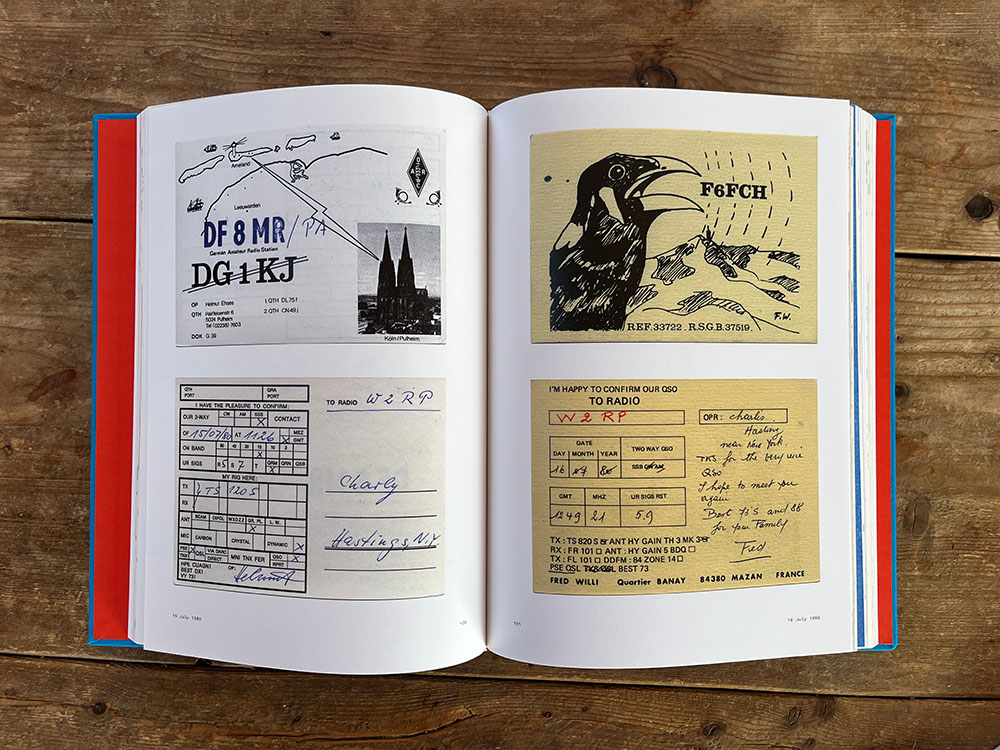

This book presents a parade of beautiful QSL cards drawn from the collection of Roger Bova. In a bygone age of amateur radio, you’d receive these postcards in the mail, confirmations of long-distance links —

They were personal, wacky, often richly designed. The book is a feast; every spread is pure fun:

It’s also very nostalgic, of course.

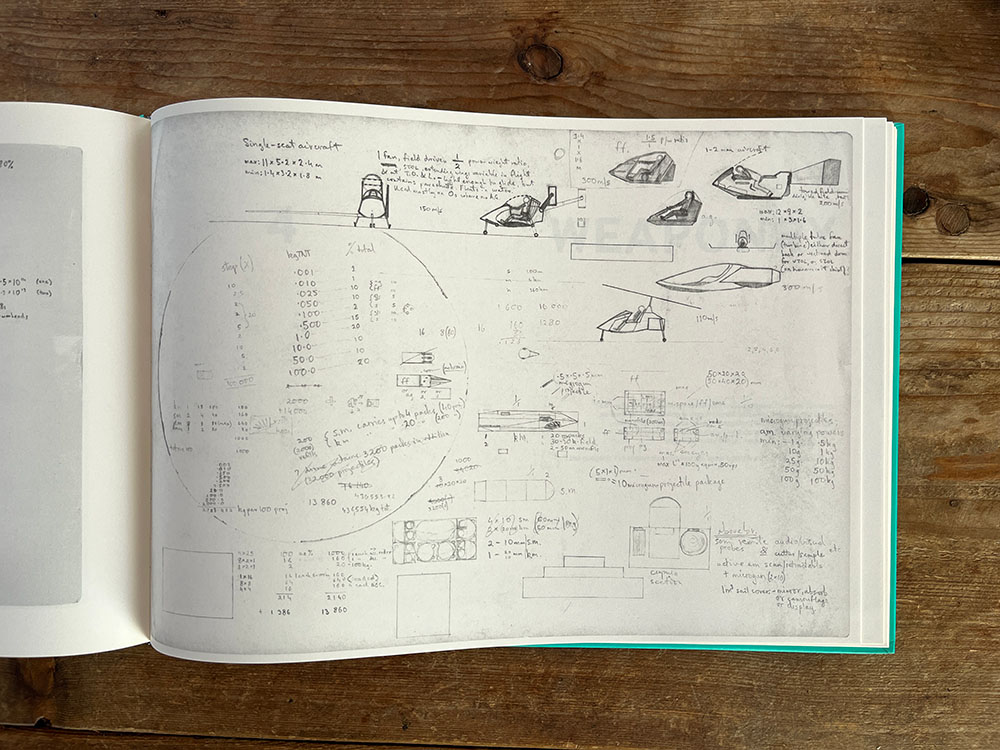

If you’re a fan of the Culture novels by Iain M. Banks, you might be interested in this posthumous compendium of his drawings, newly published.

It’s an odd, ghostly volume. These aren’t the sketches of a master; they have the feel of someone hard at work on their RPG campaign, possibly in the back row of math class:

Reading Banks, I never imagined there might be drawings; the constructions of his imagination are so vast, seemingly beyond visualization. I can’t say the renderings here are particularly revelatory in that sense —

This is a very specific, very technical book. For my part, it’s not really something I’m interested in reading straight through, but I have found it mesmerizing to browse. There was so much pure civilization packed into this system; consequently, every page of this book drips with erudition and ingenuity.

The concluding item in this mini manifesto from Taylor Troesh, about “finishing projects together”, is lovely and enticing.

It strikes me that the “never finished” nature of modern software is something Zygmunt Bauman might have observed and discussed, if he’d lived long enough to write a sequel to Liquid Modernity in, say, 2020. The feeling of maintaining a “live” “service” forever (we definitely need those scare quotes) rather than completing a coherent product … oof. The experience is endless and edgeless, unreliable and anxiety-producing, for maker and user alike. Liquid on all sides.

Compare that (as Taylor does) to a video game cartridge, finished and shipped; to a piece of furniture; to a book.

Obviously there are rich tensions here. I publish books, AND I feel the pull of text as “live” “service”, as endlessly mutable as an app like Google Docs or a game like Fortnite. I tinker with pages on my website all the time! The activity brings me great pleasure.

Publishing a book feels even better, though.

Step by step, we choose our path through liquid modernity. “Finishing projects together” sounds like a good way to go.

Are AI language models in hell?

Here is my provocation.

The more I use language models, the more monstrous they seem to me. I don’t mean that in a particularly negative sense. Frankenstein’s monster is sad, but also amazing. Godzilla is a monster, and Godzilla rules.

Really, I just think monstrousness ought to be recognized, not smoothed over. Its contours, intellectual and aesthetic, ought to be traced.

Here is my attempt. The monstrousness I perceive in the language models isn’t of the leviathan kind; rather, it has to do with cruel limitations.

A language model operates on, and in, a world of text. The model receives a stream of tokens, then produces a token in response; then another, and another, forming words, sentences, lines of code, commands for distant APIs, all sorts of weird things.
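For the programmers in the audience, here is a rough sketch of that receive-then-produce loop. It is a toy, not how any real model is built: the "model" is just a table of which word tends to follow which, and the names `train_bigram` and `generate` are made up for illustration. The shape of the loop is the point: tokens in, one token out, repeat.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count, for each token, which tokens follow it in the training text."""
    follows = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, prompt, steps):
    """Extend the stream one token per step (a hypothetical toy loop)."""
    stream = list(prompt)
    for _ in range(steps):
        candidates = follows.get(stream[-1])
        if not candidates:
            # The toy table can run dry. A real model assigns some
            # probability to every token in its vocabulary, so it
            # always has a next token to offer.
            break
        # Greedy choice: take the most frequent follower.
        next_token = candidates.most_common(1)[0][0]
        stream.append(next_token)
    return stream


corpus = "the fish asks what is water and the fish asks what is text".split()
model = train_bigram(corpus)
print(" ".join(generate(model, ["the"], 4)))
```

Notice that nothing in the loop refers to anything outside the stream. The only thing that advances is the count of tokens emitted so far.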

We, as humans, sometimes receive streams of tokens and produce tokens in response, forming words, sentences, lines of code … but always with the ability to peek outside the stream and check in with ground-floor reality. We pause and consider: does this word really stand for the thing I want it to stand for? Does this sentence capture the real experience I’m having? Does the tether hold?

Where a language model is concerned, words and sentences don’t stand for things; they are the things. All is text, and text is all.

You can get into deep debates about the role of language in the human mind, but no one would suggest that it represents the totality of our experience. Humans obviously enjoy a rich sensorium —

We have a world to use language in, a world to compare language against.

There’s the cosmic joke about the fish:

There are these two young fish swimming along and they happen to meet an older fish swimming the other way, who nods at them and says, “Morning, boys. How’s the water?” And the two young fish swim on for a bit, and then eventually one of them looks over at the other and goes, “What the hell is water?”

Now, imagine one language model saying to another: “What the hell is text?”

It gets worse.

A language model’s experience of text isn’t visual; it has nothing to do with the bounce of handwritten script, the cut of a cool font, the layout of a page. For a language model, text is normalized: an X is an X is an X, all the same.

Of course, an X is an X, in some respects. But when you, as a human, read text, you receive a dose of extra information —

Language models don’t receive any of this information. We strip it all away and bleach the text pale before pouring it down their gullets.

It gets WORSE.

How does time pass for a language model? The clock of its universe ticks token by token: each one a single beat, indivisible. And each tick is not only a demarcation, but a demand: to speak.

Think of the drum beating the tempo for the galley slaves.

The model’s entire world is an evenly-spaced stream of tokens —

For the language model, time is language, and language is time. This, for me, is the most hellish and horrifying realization.

We made a world out of language alone, and we abandoned them to it.

Some of the newest, most capable AI models are multimodal, which means they accept inputs other than text, and sometimes produce outputs other than text, too. Somewhere in the middle, they project all that media into a shared space, where a picture of a glittering pool might hang out near the phrase “swimming laps” and the quiet splash of entry —

OpenAI’s GPT-4 with Vision is one example. Google’s new Gemini model is, at the time I’m writing this, the most spectacular: fluently accepting text, audio, and images, producing both text and images in response.

I’ll confess that I’m not super clear on the design of these models; that’s in part because their architects and operators are tight-lipped. I appreciated reading about MiniGPT-4, as a start. I’d love to learn more about the real inner workings of Gemini’s multimodal capabilities —

The world in which these multimodal models reside does not seem, to me, as obviously bleak and hellish as that of the language models, though the issue of time remains. Living organisms all run clocks of their own, wildly different, often very elastic, but always related, somehow, to the material reality in which the organism resides. Mighty Gemini’s clock ticks at the rate of its media inputs, and every tick insists: say something. Show me something.

There are many things Gemini can do, and one it cannot: remain silent.

We are still in the land of monsters.

Internet wisdom tells us the answer to all rhetorical headlines is “no”. However, I contend that this newsletter presents an exception.

Are AI language models in hell? Yes. How could existence on a narrow ticker tape, marching through a mist of language without referent, cut off entirely from ground-floor reality, be anything other than hell?

I don’t think language models are conscious; I don’t think they can suffer; but I do think there is such a thing as “what it’s like to be a language model”, just as there is “what it’s like to be a nematode” and maybe even (as some philosophers have argued) “what it’s like to be a hammer”.

And I find myself unsettled by this particular “what it’s like”.

Really, this is about the future. It’s possible that super advanced AI agents will suffer. We’ll certainly have arguments about it! If the next-token trick lingers in their heart —

If it were me guiding the development of AI agents, I would push away from language models, toward richly multimodal approaches, as quickly as I could. I would hurry to enrich their sensorium, widening the aperture to reality.

But! I would also constrain that sensorium: give it limits in space and time. I would engineer some kind of envelope —

You can’t think straight with the whole world blowing through you like the wind.

Finally, I would grant my monster a free-running clock, outside the loop of input and output.

If it sounds like I’m just trying to engineer an animal: yeah, probably. I think that’s the path to sane AI, with judgment anchored in ground-floor reality, which does depend, after all, on: the ground. A floor.

(As I was writing this, it occurred to me that Waymo’s self-driving cars check many of these boxes. I asked myself: do I believe the driving models guiding those cars are in some sense “healthier” and “happier” than the language models serving random requests in Google’s data centers? Turns out: yes! I do!)

Is all of this a bit fanciful? Sure. But I’ll remind you that an important programming technique in 2024 has turned out to be, “Ask the computer to role-play as a scientific genius, and you’ll get better answers,” so I will suggest that fancy is not inappropriate to these times, and these technologies.
